A (Proto) Cybernetic Explanation

Kenneth Craik: “an essential quality of neural machinery is that it expresses the capacity to model external events.” [1]

Kenneth Craik: “[...] our brains and minds are part of a continuous causal chain which includes the minds and brains of other men and it is senseless to stop short in tracing the springs of our ordinary, co-operative acts.”[2]

Warren McCulloch: “In 1943, Kenneth Craik published his little book called The Nature of Explanation, which I read five times before I realized why Einstein said it was a great book” […] “[Kenneth Craik’s] work has changed the course of British physiological psychology. It is close to cybernetics. Craik thought of our memory as a model of the world with us in it, which we update every tenth of a second for position, every two for velocity, and every three for acceleration as long as we are awake.”[3]

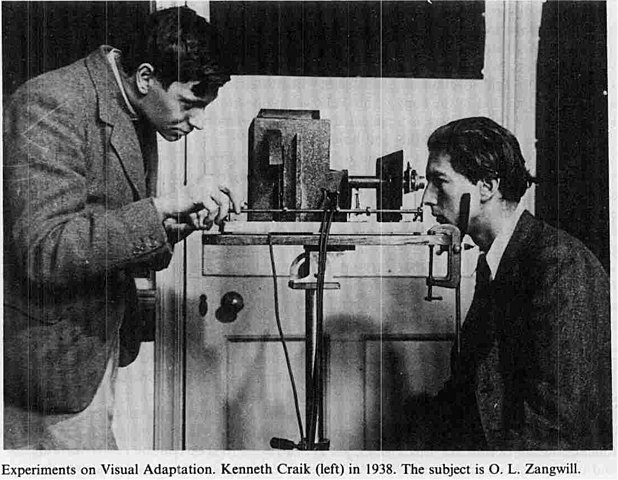

Kenneth Craik at work

Recent assessments of the cybernetic era often stress that the key members of the cybernetic movement were engaged in military research during World War II, and it was in that context that the relevance of feedback and servomechanisms became paramount. It is undoubtedly the case that any mobile servomechanism can be trained to scan and attack a target – just as any cybernetic tortoise or maze-running mechanical rat can be fitted with a gun or bomb. The degree to which the cybernetic moment was built on an “ontology of war” should not be underestimated. However, it is equally important to recognise that the builders of the cybernetic devices studied in this text were – before and after the war – physiological psychologists leading the field of brain research. In this capacity they developed machines which were designed to read and affect the nervous system. The British cyberneticians Kenneth Craik, William Ross Ashby and William Grey Walter were all experimental psychologists, convinced that machines could be built which model the mind and nervous system. Such machines could express mind at its most rudimentary level: as an organism which feeds information through its system and which affords adaptation to its immediate environment.

The cybernetic moment might be seen, therefore, as a meeting of (a) the contingencies of war with (b) the conception of mind as coextensive with the system it inhabits. It is only after WWII that these two discourses overlap and the principle of a weapon seeking a target is translated to the principle of an organism seeking purpose.

This meeting point was articulated by Kenneth Craik in the early 1940s. The implication arising from the premise that the organism seeking purpose can be modelled by a machine is that the discourse of the organism is also the discourse of the machine. This conflation did not arrive with the moment of cybernetics, however. Samuel Butler had been amongst those who had recognised the implications of the advent of the “vapour engine” for the discourse of the machine and the discourse of evolution (see chapter two), and Alfred Russel Wallace had observed that the operations of the vapour engine and natural selection were in principle the same. Long before the advent of cybernetics, in 1930, the experimental behaviourist Clark Hull, a builder of “thinking machines”, maintained: “it should be a matter of no great difficulty to construct parallel inanimate mechanisms… which will genuinely manifest the qualities of intelligence, insight, and purpose, and which will insofar be truly psychic”.[4] The premise of organism-machine equivalence was built into the research cultures of experimental psychology.

The three British neuroscientist-cyberneticians, William Ross Ashby, Kenneth Craik and William Grey Walter, all researched war-time control systems (also known as anti-fire systems: anti-aircraft (AA) systems).[5] All three were hands-on engineers and all were at the forefront of experimental brain research. As brain researchers, they sought to extend Pavlov’s conditioned reflex through experimentation with servomechanisms. Grey Walter and Kenneth Craik both worked at the conditioned reflex laboratory at Cambridge, where Craik was based, and Craik was a frequent visitor to the Burden Neurological Institute, Bristol, where Grey Walter was the director. Here Craik collaborated with Grey Walter, using the EEG machine which Walter had developed to research the brain’s scanning function in relation to its production of particular brain waves (“alpha waves”). It was Craik who first suggested the relation between scanning and feedback to Grey Walter and encouraged the construction of a servomechanism which would combine the elements of scanning and feedback. This resulted in the Machina Speculatrix, or cybernetic tortoise (1947), which will be described in some detail soon.

The Nature of Psychology

Kenneth Craik died in a cycling accident in 1945 at the age of 31 and the unfinished The Nature of Psychology (1943) was only partially published years after his death.[6] His philosophical work The Nature of Explanation (1943) was however published in his lifetime, as was his influential paper Theory of the Human Operator in Control Systems (1943). Craik's work anticipated many of the key ideas in relation to negative feedback and purpose which would later be discussed in Norbert Wiener’s Cybernetics and in the Macy conferences on cybernetics in New York (1946-1953).

In 1947, following a visit to the Burden Neurological Institute by Norbert Wiener, Grey Walter noted: “We had a visit yesterday from a Professor Wiener, from Boston. I met him over there last winter and found his views somewhat difficult to absorb, but he represents quite a large group in the States… These people are thinking on very much the same lines as Kenneth Craik did, but with much less sparkle and humour.”[7]

In The Nature of Explanation Craik argues for a hylozoist conception of mind and consciousness, which is to say that there is no distinction between mind and matter. To underline this interconnectedness between mind and matter, Craik argues for a particular conception of causation and for a particular function for the model as a form of immanent analogy. Central to Craik’s argument is that model making is constitutive of mind, that an essential quality of neural machinery is that it expresses the capacity to model external events.[8]

Despite the differences in style identified by Grey Walter, there is remarkable similarity between Wiener’s and Craik’s respective systems. Because both derived their work on feedback systems from their war-time work on anti-aircraft predictors, and because both also extended their war work to find application to universal principles of organisation, Craik has been referred to as “the English Norbert Wiener”.[9]

In The Mechanism of Human Action (c.1943) Craik first cites 'dynamic equilibrium'[10] as the regulator of both servomechanisms and organisms. Here, the “stable state” is regulated by the use of external energy, which is drawn on to correct any instability;[11] Craik characterises this expenditure of energy as "downhill".

Craik: “Living organisms were, with few exceptions, the first devices to use a downhill reaction – such as the combustion of carbohydrates – to provide them with a store of energy by which to drive a few uphill reactions for their own benefit. This does not mean, of course, that the living organisms live contrary to the second law of thermodynamics and can prevent the gradual degeneration of energy; it merely means that they have means of storing external energy in potential form for driving some local uphill reaction. If we consider the whole picture, the reaction is, as far as we know always downhill on average; but some parts of it may go uphill by virtue of the energy derived from other parts.”[12]

Here Craik is speaking within the discourse of “dynamic equilibrium” – which, in itself, does not necessitate homeostasis, although homeostasis in a system is an expression of equilibrium. Craik will later introduce negative feedback as the regulator of the mechanism-organism.

For now, the basic, down-to-earth principles for Craik’s mechanism-organism are:

(1) The organism or servomechanism stores energy taken from the outside – this can be food converted to glucose and carried through the system of an organism, or the energy stored in the battery of the servomechanism.

(2) The controlled liberation of energy (which takes advantage of "downhill" reactions when expending energy).

To approach a “common sense” definition of life, the mechanism-organism further requires:

(a) a sensory device for detecting and countering the disturbance,

(b) a computing device for determining the right kind of response,

(c) an effector or motor mechanism for making the response [13]

Craik next considers the role of negative feedback in the organisation of his machine-organism's behaviour and proposes his own adaptive model. His theoretical machine is simply a rod attached to a table which would resist any attempt to push it over. It would contain a 'sensory element' such as a pendulum which would be actuated when the rod is pushed from the vertical. The misalignment controls the motor, "which runs in the appropriate direction until the misalignment has decreased to zero."[14] Craik's hypothetical model demonstrates how negative feedback establishes stability in the organism and machine[15] and that this is in common with any "automatic regulating or stability restoring machine".[16] Craik goes on to extend this into the biological realm. From a biological point of view negative feedback implies "that it is modifying its behaviour (that is the motion of its motor) as a result of its own previous behaviour; if that behaviour has restored equilibrium, and thus has been successful, the machine will stop, otherwise it will go on trying."[17]
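Craik's rod can be sketched as a simple negative feedback loop. The following is a minimal illustration only, not a reconstruction of any device Craik built: the function name `simulate_rod`, the proportional `gain` and the `tolerance` at which the motor stops are all assumptions introduced here. The three commented steps correspond to the sensory, computing and effector elements listed earlier.

```python
def simulate_rod(initial_tilt, gain=0.5, steps=50, tolerance=1e-3):
    """Return the successive tilts of a rod corrected by negative feedback.

    All parameter names and values are illustrative assumptions.
    """
    tilt = initial_tilt
    history = [tilt]
    for _ in range(steps):
        error = tilt                  # sensory element: misalignment from vertical
        if abs(error) < tolerance:    # equilibrium restored, so the motor stops
            break
        correction = -gain * error    # computing element: respond against the error
        tilt += correction            # effector: the motor moves the rod
        history.append(tilt)
    return history


if __name__ == "__main__":
    for tilt in simulate_rod(10.0)[:5]:
        print(round(tilt, 3))
```

Because the correction always opposes the measured misalignment, the tilt shrinks at every step; the machine "goes on trying" until the error falls below the tolerance and then stops.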

So far Craik's machine is not capable of "spontaneous" activity - for this to happen positive feedback would have to come into play. Craik introduces the figure of the maze running rat, who is encouraged by negative feedback to avoid the electrified walls of its maze and is forced forward by positive feedback. Craik suggests such exploratory behaviour may be cumulative. Here Craik links positive feedback with a principle of pleasure, which will direct us toward the cybernetic creatures of the future – Grey Walter's Tortoise and Ross Ashby's Homeostat, for example – and to Lacan's fascination with machines which are capable of homeostasis. Craik goes on: "Thus we may suggest that successful and pleasant activities tend, in some way to exert positive feedback and to be cumulative; and although, of course, we do not know where the function of the pleasure easier enters we could make a machine with positive feedback which would continue to perform and increase any activity, by whatever slight random cause it had been initiated, provided it did not endanger the stability and equilibrium of the machine; this would give more appearance of spontaneity."[18]
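The machine Craik imagines here, one that amplifies any activity "by whatever slight random cause it had been initiated" so long as stability is not endangered, can be illustrated with a toy loop. This is a sketch under stated assumptions: the name `simulate_activity`, the amplification `gain` and the `limit` standing in for the machine's stability constraint are hypothetical and do not come from Craik's text.

```python
import random


def simulate_activity(steps=200, gain=1.2, limit=1.0, seed=0):
    """Amplify a slight random disturbance by positive feedback, within a bound.

    All parameter names and values are illustrative assumptions.
    """
    rng = random.Random(seed)
    activity = rng.uniform(-0.01, 0.01)   # the slight random initiating cause
    history = [activity]
    for _ in range(steps):
        activity = gain * activity        # positive feedback: activity feeds itself
        # stability constraint: activity may not exceed what the machine tolerates
        activity = max(-limit, min(limit, activity))
        history.append(activity)
    return history


if __name__ == "__main__":
    trace = simulate_activity()
    print(abs(trace[0]) < abs(trace[-1]))
```

Whichever direction the initial disturbance happens to take, the loop increases it until the bound is reached, which is what lends such a machine its "appearance of spontaneity".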

Craik goes on to explain how a future machine could be regulated by long and short feedback loops, engendering a type of mechanical learning which is analogous to 'response modification' or 'selective learning'. Craik distinguishes these forms of learning from 'sign' learning, which he regarded as the highest form of conditioning. In sign learning "one stimulus comes to suggest the probable presence of another".[19] The most famous of these stimulus-response reactions is that of Pavlov's dogs, which salivated at the sound of a bell which they associated with food. We will see in the next chapter that it was precisely this response that Grey Walter was trying to engineer in his CORA (COnditioned Reflex Analogue), an adapted version of his Cybernetic Tortoise which Grey Walter demonstrated at the Cybernetic Congress of 1951 in Paris.

Craik points out that machines are able to show more adaptability and "purposiveness" than one is apt to consider possible "from the study of gramophones, clocks, automatic lathes" which lack feedback.[21] He suggests that more will be learned through continued experimentation and cites two experiments that show the way forward, one by Bradner (1937) and the second by Clark Hull (1943).

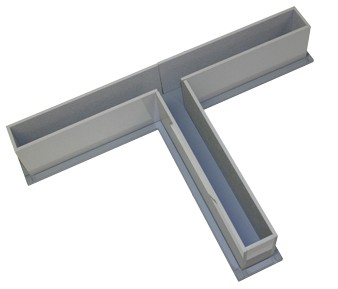

A T-Maze

In Bradner's model a device learns to run to the shorter arm of a T-maze provided it is replaced at the start of the maze immediately after each attempt. Failure to place the machine at the start immediately gives the creature time to "forget", as its response is effected by thermal resistance. Craik describes this as a form of selection: "what really happens is that the machine is arranged to forget, and is given a long time to forget its unsuccessful efforts and very little time to remember its more successful efforts". Bradner's experiments do demonstrate a feedback response, but the repeated placing and replacing of the device breaks any "biological" continuity. However, the T-maze device does affirm Craik's instinct that a cumulative information circuit may hold the key to a future behaving and thinking machine. The same is true of Clark Hull's experiment. In Principles of Behaviour[22] Hull gives an account of a conditioned reflex model in which the pressing of a Morse key (stimulus) evokes a flashing light (response); the "high resistance" of the circuit is reduced by the frequent passage of current through it.
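The selection-by-forgetting that Craik describes can be illustrated with a toy simulation. It is emphatically not a reconstruction of Bradner's circuit: the "warmth" traces, the exponential cooling, the logistic choice rule and every parameter value below are assumptions made for the sake of the sketch. Each run warms the trace of the arm chosen; both traces then cool for as long as the run, plus any delay before replacement, lasts.

```python
import math
import random


def short_arm_fraction(n_trials, delay, seed=0,
                       run_time=(1.0, 3.0), cooling=0.5, heat=2.0):
    """Fraction of trials on which a toy 'thermal' learner picks the short arm.

    Index 0 is the short arm and index 1 the long arm. The model and all
    parameter values are illustrative assumptions.
    """
    rng = random.Random(seed)
    warmth = [0.0, 0.0]
    short_choices = 0
    for _ in range(n_trials):
        # the warmer trace is favoured; equal traces give an even choice
        p_short = 1.0 / (1.0 + math.exp(-(warmth[0] - warmth[1])))
        arm = 0 if rng.random() < p_short else 1
        if arm == 0:
            short_choices += 1
        warmth[arm] += heat                    # the run just made leaves a trace
        elapsed = run_time[arm] + delay        # time until the next attempt
        decay = math.exp(-cooling * elapsed)   # traces cool ("forget") meanwhile
        warmth = [w * decay for w in warmth]
    return short_choices / n_trials


if __name__ == "__main__":
    print(short_arm_fraction(5000, delay=0.0))    # replaced immediately
    print(short_arm_fraction(5000, delay=20.0))   # left long enough to forget
```

With immediate replacement, a short run leaves little time for its trace to cool, so short-arm choices reinforce themselves; with a long delay both traces cool almost completely and the choice drifts back towards chance.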

Kenneth Craik would not live to see the affirmation of his theories: that adaptation is afforded by long and short feedback loops in a circuit, and that the principles of experimental psychology and WWII research into predictive servomechanisms would combine to produce the new discipline of cybernetics. His work and writing presented models for his contemporaries to follow, principally Ross Ashby and Grey Walter, whose Homeostat and cybernetic tortoise would build on his legacy.

The Nature of Explanation

In his philosophical work, The Nature of Explanation (1943), Craik argues for an anti-Cartesian, non-vitalistic hylozoism – which is to say that mind is a function of matter, rather than an essence opposing matter. It includes the chapter Hypothesis on the Nature of Thought. The chapter bears a striking resemblance to Samuel Butler's argumentation in Evolution Old and New, in that both Butler and Craik hold the model as centrally important to the evolution and processes of thought. Both agree that the human ability to model dispenses with the need to go through many redundant generations before reaching the optimum design (as does the evolutionary process). Craik points out that the ship, the Queen Mary, is designed with a model in a tank; it would be folly to produce many generations of unseaworthy vessels before arriving at the one that is able to safely cross the Atlantic. But more importantly, a model might have a relation structure to the thing it models, which means it does not resemble the thing it models but parallels its behaviour, which is translated into the terms of the original object. Craik gives the example of Kelvin's tide predictor, which bears no physical resemblance to the tides it predicts. The tide predictor is a series of pulleys which reproduce oscillations at frequencies which, in essential respects, resemble the variation in tide level at a given spot.[23] Craik takes this idea of 'relation structure' and applies it to Clark Hull's stimulus-response experiments, which bear a relation structure in essential respects to the things they model (in this case synaptic response to stimulus, the capacity to remember).[24]

Craik points out that we must adopt a very specific understanding of "analogy" when we make these parallels between the physical world and the devices that model it. "[T]he telephone exchange may resemble the nervous system in just the way I find important; but the central point is the principle underlining the similarity"[25]

One could inventory the countless instances in which a model is not like the thing it parallels; what is significant is the principle which underlies the similarities, which is their tendency to organise stimulus into symbolic order. Why, Craik asks, does this tendency to make analogy actually exist? Humans have a tendency to see simple rules (relation structures) operating within complex systems because this reflects the structure of reality. Indeed, Craik goes on to suggest, we might consider that our brain models the world we inhabit because our brain parallels the systems that bring it into consciousness.[26] "The emergence of common principles and similarities is not so surprising if it is shown that all substance is composed of similar ultimate units..."[27]

For Craik, “significance” (signification) is an essential element of human experience. Significance is the relatedness of things. For Craik, “all propositions carry as it were the right to apply to something objectively real”.[28] Once one accepts the possibility of symbolism one must accept that symbols can represent alternatives, which experiment decides between; in this sense experiment becomes the arbiter of what is the case. In this way the justification of causality must be its trial by experiment.[29] Craik draws on the experimental hypothesis of Helmholtz, and supports the idea of “causal interaction in nature”. Admittedly, one cannot trace causation back to a first cause, but this is not a sufficient reason to doubt cause as such (as did Berkeley and Hume). The model works in the same way as the thing it parallels, and in so doing it goes through the three phases of translation, inference and re-translation:

1) translation of external process into symbols

2) inference, which is the arrival at other symbols through reasoned deduction

3) re-translation into external process (such as building and predicting).
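The three phases can be illustrated with a deliberately simple predictor. This is a sketch only: the function names, the straight-line extrapolation and the sample observations are assumptions chosen for clarity, and do not describe the working of any actual anti-aircraft predictor.

```python
def translate(observations):
    """Translation: external process -> symbols, here (time, position) pairs."""
    return [(float(t), float(x)) for t, x in observations]


def infer(symbols, lead_time):
    """Inference: arrive at a new symbol from the old ones.

    Assumes (hypothetically) that the target keeps its most recent velocity.
    """
    (t0, x0), (t1, x1) = symbols[-2], symbols[-1]
    velocity = (x1 - x0) / (t1 - t0)
    return x1 + velocity * lead_time


def retranslate(predicted_position):
    """Re-translation: symbol -> external process, here an aiming instruction."""
    return f"aim at position {predicted_position:.1f}"


if __name__ == "__main__":
    observed = [(0, 100), (1, 120), (2, 140)]   # hypothetical sightings
    print(retranslate(infer(translate(observed), lead_time=3)))
    # prints: aim at position 200.0
```

The prediction refers to an event which has not yet occurred; the model reaches it by operating on symbols rather than by waiting for the target to arrive.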

Some machines demonstrate this triadic ability, such as anti-aircraft predictors and calculating machines. Craik again cites Clark Hull’s models, which respond to altered systems; from here Craik posits that an essential quality of neural machinery is that it expresses the capacity to model external events. The principle underlying the similarity of these machines is more important than superficial analogy. The brain may work like a telephone exchange, but the brain’s similarity to the telephone’s switching mechanism is only one way to understand the operations of mind. The structural principle underlying the similarities is Craik’s triad of translation, inference and re-translation.[30] Craik applies this triad to brain function as:

1) the translation of external events into neural patterns through the stimulation of the sense organs

2) the interaction and stimulation of other neural patterns (association)

3) the excitation of these effectors or motor organs.

Craik notes that to signify – to make a model in your head of something which could be actualized – has a close relation to entropy because signification involves the conservation of energy – it adds to the store of “downhill” actions. For Craik his own scheme “[…] would be a hylozoist rather than a materialistic scheme. It would attribute consciousness and conscious organisation to matter whenever it is physically organised in certain ways.” The machines Craik cites are an expression of mind, an expression of an ordering structure.

"My hypothesis then is that thought models, or parallels, reality..." [56, 57, 58]

This notion of thinking would be adopted by fellow British cyberneticians. When Ashby demonstrated his self-regulating machine, the homeostat, at the Macy conferences on cybernetics, he would describe it in Craikian terms, a position that would cause some consternation amongst the delegates (see Homeostat).

Craik further develops the notion of the model with a very specific reading of the function of metaphor. Things, in their coming into being, mime the structures that run through reality. This is not to say that reality is an inert exterior object which is given significance (code is not written into or onto reality), but rather reality is emergent within the process of signifying itself. One makes a distinction and the difference establishes a relation. Thought, in the action of modelling, does not copy reality because “our internal model of reality can predict events which have not occurred”. This predictive ability “saves time, expense and even life”.[31] The ability to predict is “downhill” all the way – it is negentropic. It begins with the functions of homeostasis in the organism and extends to conscious purpose – this is the position adopted by British cyberneticians and endorsed by Gregory Bateson and Warren McCulloch.

The modelling of reality is part of a larger system, which introspective psychology and analytical philosophy have been unable to address. The degree of organisation which goes on at a non-conscious level is however evidenced in neuropathological experiments, in which purposive activity is highlighted. We generally pass over such activity as natural because greater mechanical complexity often leads to greater simplicity and coordination of performance; introspective psychology and analytical philosophy pay little attention to such activities as constitutive of thought (we will see in chapter * that Gregory Bateson and Warren McCulloch’s cybernetic critique of Freudianism was established on this premise).

For Craik the processes of reasoning are fundamentally no different from the mechanisms of physical nature itself. Neural mechanisms parallel the behaviour and interaction of physical objects; such processes are suited to imitating the objective reality they are part of, in order to provide information which is not directly observable (to predict and to model).[32] Craik compares the performance of an aeroplane with that of a pile of stones. The aeroplane is more complicated but its performance is more unified. If the parts of the aeroplane had been dropped into a bucket the atomic complexity would be high but the simplicity of performance would be nil.[33] Once the plane is built, however, there is an atomic and relational complexity which increases the possibilities of performance. The mind, in modelling reality, takes clues in perception – there is no obligation to decide whether such clues are conscious interpretations or automatic responses to reality which express atomic and relational complexity. For Craik “all perceptual and thinking processes are continuous with the workings of the external world and of the nervous system”, and there is no hard and fast line between involuntary actions and conscious thought. Craik now approaches a position close to Bateson’s, stressing the “continuity of man and his environment” […] “...our brains and minds are part of a continuous causal chain which includes the minds and brains of other men, and it is senseless to stop short in tracing the springs of our ordinary, co-operative acts” and again Craik stresses that “man is part of a causally connected universe and his actions are part of the continuous interaction taking place.”[34]

The process of experimentation, and the operations of servomechanisms, are important for this generation of philosopher-engineers because experimentation serves as the arbiter of the model proposed by the processes of mind, and also because servomechanisms operate at the point where significance emerges. They provide models for a future stage of development, the conscious machine. This is the future that Samuel Butler modelled from the vapour engine and the future that Grey Walter would go on to model in the cybernetic tortoise.

Experimentation with servomechanisms is, for Craik, Ashby and Grey Walter, an expression of the more complex structures that underlie them. In this respect Ross Ashby’s homeostat and Grey Walter’s tortoise are a result of the discourse of Kenneth Craik and of a particularly experimental, hylozoist approach to neuroscience and behaviour. This involved rigorous mathematical and philosophical theorisation alongside the construction of devices in the metal, alongside the analysis of what these machines express. If these creatures think, one might ask, how do they think? Perhaps, the machines themselves suggest – in their unconscious, indifferent relation to us, in their blind insistence on passing through our space, making demands on our matter – that we don’t actually think in the way we think we think.[35] Kenneth Craik left the path open for his colleagues Ross Ashby and Grey Walter to build actual performative models which challenged fundamentally what we think thinking is.

- ↑ K. Craik, The Nature of Explanation (1943)

- ↑ K. Craik, The Nature of Explanation (1943)

- ↑ Warren S. McCulloch, The Beginning of Cybernetics, Macy Conferences on Cybernetics, Vol. 2 p 345

- ↑ Clark Hull

- ↑ Kenneth Craik’s wartime research included researching combatants’ performance in a cockpit and night vision. This experience led him to understand that the pilot in a cockpit behaves like a servomechanism. W. Grey Walter worked on radar and control systems, as did Ashby.

- ↑ Kenneth J. Craik, The Nature of Psychology: A Selection of Papers, Essays and Other Writings, Stephen L. Sherwood ed., 1966, with Introduction by Warren McCulloch, Leo Verbeek and Stephen L. Sherwood.

- ↑ cite

- ↑ Craik The Nature of Explanation (1943)

- ↑ A. Pickering The Cybernetic Brain

- ↑ Craik makes reference to Herbert Spencer and Pavlov in respect to the term "dynamic equilibrium"

- ↑ The Nature of Psychology, p. 13

- ↑ K. Craik, The Mechanism of Human Action, in The Nature of Psychology (1943), p. 14

- ↑ Craik, The Nature of Psychology (1943), Cambridge, p. 14

- ↑ K. Craik, The Nature of Psychology, p. 16

- ↑ The Nature of Psychology, pp. 15-16

- ↑ p. 16

- ↑ K. Craik, The Nature of Psychology, p. 16. Note: If Craik's model is meant to do as little as possible, to simply establish its ability to restore itself to maximum degree of stability (inaction), it is very similar in principle to Ross Ashby's Homeostat. See Steps to a (media) Ecology. Craik's rod is also an illustration of Lacan's reading of the death drive in Freud's Beyond the Pleasure Principle - see Jacques Lacan – The Tortoise and Homeostasis

- ↑ Craik, The Mechanism of Human Action, in The Nature of Psychology (1943), p. 17

- ↑ Craik, The Mechanism of Human Action, in The Nature of Psychology (1943), p. 18

- ↑ To assist the conceptualisation of a learning machine Craik draws on the American behaviourist Clark Hull’s “conditioned reflex models”. Clark Hull had compiled a series of “idea books” (1929-52) in which he devised mechanical and electro-chemical automata which worked on the Pavlovian principle of trial and error conditioning (Pavlov’s Conditioned Reflexes was published in English translation in 1927). Hull also derived his own mathematical system to describe the operations of these automata and wanted to apply the rules of the conditioned reflex as computational units within his machines. Hull aimed to build a conditioned reflex machine that would demonstrate intelligence through contact with its environment. The devices were subjected to a “combination of excitory and inhibitory procedures and would exhibit an array of known conditioning phenomena”. That is, the machines would “learn” to overcome obstacles in order to achieve a particular goal. Hull methodically analysed how instances of purpose and insight could be derived from the simple interaction of elementarily conditioned habits. “I feel” stated Hull, “that all forms of action, including the highest forms of intelligent and reflective action and thought, can be handled from purely materialistic and mechanistic standpoints.” Hull in David E. Leary, Metaphors in the History of Psychology, Cambridge, 1990

- ↑ Craik, The Mechanism of Human Action, in The Nature of Psychology (1943), p. 20

- ↑ C. L. Hull, Principles of Behaviour (1943), New York: Appleton-Century-Crofts, p. 27

- ↑ Nature of Explanation p52

- ↑ Craik describes the nervous system as organised stochastically: the signals ‘take the path of least resistance’. This process works like the telephone exchange, says Craik; he also likens the human brain to a computer (the model being Douglas Hartree’s differential analyser (1934)). These are analogies, but they are physical working models which “work in the same way as the process it parallels”; the machine does not have to physically resemble the thing it parallels, “it works in the same way in certain essential respects”, p. 53

- ↑ Craik, Nature of Explanation, p53

- ↑ p55

- ↑ p54

- ↑ Craik, The Nature of Explanation (1943) Cambridge, 94

- ↑ Craik The Nature of Explanation (1943) Cambridge, 46

- ↑ Craik, The Nature of Explanation (1943), Cambridge, p. 51

- ↑ Craik The Nature of Explanation, 82

- ↑ Craik The Nature of Explanation p99

- ↑ Craik The Nature of Explanation 84-85

- ↑ Craik The Nature of Explanation 87-88 NOTE: Craik’s wartime research included researching combatants’ performance in a cockpit and night vision. W. Grey Walter worked on radar and control systems, as did Ashby.

- ↑ Craik, IX-XI Note: This experimental epistemology is in line with the work of Warren McCulloch – who co-wrote the introduction to the belated publication of a selection of Craik’s papers and essays in 1966